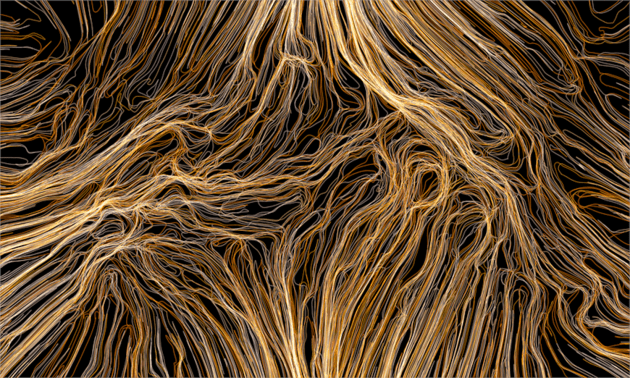

Volatile Formation

Roland Snooks – Log 25, Summer 2012

Volatility is a critical condition for design. Within the intensive processes of formation that underlie complex phenomena, those which self-organize and are capable of catastrophic change, it is the volatile nature of the system that is generative. From these far-from-equilibrium conditions new forms of order emerge, generating strange behaviors, characteristics, and traits – conditions potent with architectural possibility.

Computation is a necessary accomplice in working with complex systems, but in architecture computational methods have been adopted that privilege certainty over open-ended processes. This adoption is based on a set of false assumptions regarding the pseudo-objective nature of computational design. These systems of parametric variation and optimization are complicit in the automation of design, marginalizing risk, and foregrounding stability and equilibrium. I would argue instead for complex systems of formation that operate through the volatile interaction of algorithmic behaviors and engage the speculative potential of computational process.

Behavioral formation is posited as a nonlinear algorithmic design methodology that seeds specific architectural intent within the local interactions of multi-agent systems. Within the processes of behavioral formation, two modes of operation are interrogated that set up resilient design models to negotiate the internal resistance of local and global procedures. The first is centered on the inconsistent feedback between algorithmic systems and explicit decisions – a form of messy computation. The second is an argument for a heuristic role for structural feedback within multi-agent algorithmic design.

The instability of a system enables the emergence of an order that is radically different from its initial conditions. Complex systems with the capacity for catastrophic change, such as Stephen Wolfram’s cellular automaton Rule 110, operate on the edge of chaos and are potent with generative capacities. Within these globally volatile conditions, new characteristics and locally stable situations emerge from a constant state of flux, resisting periodic or predictable order. Wolfram points out that emergence is an unquantifiable phenomenon; the categorization or evaluation of complexity is qualitative and subjective. Similarly, the cusps and bifurcations of chaotic systems map catastrophic change within internally coherent systems. Manuel de Landa discusses these states in terms of thermodynamics: “It is only in these far-from-equilibrium conditions, only in this singular zone of intensity, that difference-driven morphogenesis comes into its own, and that matter becomes an active material agent, one which does not need form to come and impose itself from the outside.” It is the emergent capacities of these complex systems hovering between order and chaos that offer significant generative potential for architecture.
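Rule 110 is compact enough to state directly. The Python sketch below is a minimal illustration, not part of the original argument: each cell's next state is read from the bits of the rule number, indexed by its three-cell neighborhood. The wrap-around boundary, row width, and single-cell seed are assumptions chosen for illustration.

```python
def ca_step(cells: list[int], rule: int = 110) -> list[int]:
    """Advance a 1-D elementary cellular automaton by one generation.

    Each cell's next state is the bit of `rule` indexed by its three-cell
    neighborhood (left, self, right) read as a binary number from 0 to 7.
    The row wraps around at both ends (periodic boundary, an assumption).
    """
    n = len(cells)
    nxt = []
    for i in range(n):
        left, center, right = cells[i - 1], cells[i], cells[(i + 1) % n]
        index = (left << 2) | (center << 1) | right
        nxt.append((rule >> index) & 1)
    return nxt


def run(width: int = 64, generations: int = 32, rule: int = 110) -> list[list[int]]:
    """Evolve a row seeded with a single live cell at the right-hand edge."""
    cells = [0] * width
    cells[-1] = 1
    history = [cells]
    for _ in range(generations):
        cells = ca_step(cells, rule)
        history.append(cells)
    return history


if __name__ == "__main__":
    for row in run():
        print("".join("#" if c else "." for c in row))
```

Printing more generations on a wider row shows the persistent, interacting localized structures for which Rule 110 is known.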

The underlying reliance of complex systems on iteration and feedback has rendered computation an indispensable tool in the generation and simulation of complexity. Architects, however, have largely relegated computation to the service of explicit control. Within architecture, computational design can be described through several broad categories: parametric modeling, optimization, form-finding, and generative algorithms. The first three categories are inherently based on stability, or the search for equilibrium, while the last exposes the potential of open-ended complex systems. The necessity for risk and indeterminacy in design is not reducible to the dichotomy of linear and nonlinear systems – although these are key protagonists – but is more precisely defined by the opposition of stability and volatility.

Parametric, or associative, modeling describes a linear relationship between a parameter and geometric transformation, enabling the precise control and manipulation of flexible digital models. It is curious, then, that parametric design has been so enthusiastically embraced as a generative tool in contemporary architecture. Generative design can be described as designing the design process rather than the artifact. Parametric models are structured hierarchically, however, having direct, cascading, causal relationships – an obvious impediment to this description of generative design. The parametrics within these models – as the now ubiquitous sliders in software programs epitomize – confine the model to a known set of limits. So while parametric models enable a distribution of difference, this is not the difference that emerges from intensive processes, but rather a directly described, top-down, smooth gradient operating within a predefined range. Here, all possibility is already given within the starting condition.
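The hierarchy described above can be made concrete with a minimal sketch, assuming a hypothetical twisting tower in which one bounded slider drives the rotation of every floor plate. `Slider` and `tower_floor_plates` are illustrative names, not the API of any modeling package; the point is that the dependency chain runs one way and every output lies within the slider's predefined range.

```python
import math
from dataclasses import dataclass


@dataclass
class Slider:
    """A bounded parameter, like a slider in parametric modeling software."""
    value: float
    lo: float
    hi: float

    def set(self, v: float) -> None:
        # Clamp to the predefined range: the model can only express states
        # that already lie between lo and hi.
        self.value = min(max(v, self.lo), self.hi)


def tower_floor_plates(twist: Slider, floors: int = 10, radius: float = 5.0):
    """Hypothetical associative model of a twisting tower.

    The dependency chain is strictly top-down: slider value -> rotation of
    each floor (a linear gradient from 0 to the slider value) -> corner
    points of each square floor plate. Nothing downstream feeds back.
    """
    plates = []
    for k in range(floors):
        angle = math.radians(twist.value) * k / max(floors - 1, 1)
        corners = [
            (radius * math.cos(angle + q * math.pi / 2),
             radius * math.sin(angle + q * math.pi / 2))
            for q in range(4)
        ]
        plates.append(corners)
    return plates


if __name__ == "__main__":
    twist = Slider(value=0.0, lo=0.0, hi=90.0)
    twist.set(45.0)
    for corners in tower_floor_plates(twist, floors=4):
        print([(round(x, 2), round(y, 2)) for x, y in corners])
```

However the slider is moved, the result is a smooth rotational gradient whose full range of outcomes is fixed by `lo` and `hi` before the model is ever run.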

Optimization routines search a discrete set of possible solutions for the maximization or minimization of a known condition such as structural or environmental efficiency. The volatility of the search algorithms that underlie optimization processes ranges from incremental change to the stochastic operations of particle swarm optimization or genetic algorithms. The internal volatility of search algorithms has little influence on the volatility of the design process, however, as these algorithms are searching for a predetermined optimal position. These strategies presuppose that architecture can be objectively evaluated through predetermined criteria, which is effectively a straitjacket for speculative design. Optimization rejects subjective evaluation in favor of a reductive approach: the qualitative nuances, references, complexity, richness, and experience of architecture cannot be expressed as numerical criteria. While optimization is able to engage in highly volatile generative processes, it must operate as an accomplice rather than the driver of the process – conditioning volatile outcomes rather than attempting to generate them.
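To illustrate why a stochastic search does not make the design process volatile, the sketch below uses random-perturbation hill climbing, a simpler stand-in for particle swarm optimization or a genetic algorithm (it is neither). The objective function and its optimum at x = 3 are hypothetical.

```python
import random


def objective(x: float) -> float:
    """Hypothetical performance score with one known optimum at x = 3."""
    return -(x - 3.0) ** 2


def stochastic_search(seed: int, steps: int = 5000, step_size: float = 0.5) -> float:
    """Random-perturbation hill climbing.

    Each step proposes a random Gaussian move and keeps it only if the
    score improves. The moves are stochastic, but the acceptance rule
    always drives the search toward the same predetermined optimum.
    """
    rng = random.Random(seed)
    x = rng.uniform(-10.0, 10.0)
    for _ in range(steps):
        candidate = x + rng.gauss(0.0, step_size)
        if objective(candidate) > objective(x):
            x = candidate
    return x


if __name__ == "__main__":
    for seed in range(5):
        print(seed, round(stochastic_search(seed), 4))
```

Different seeds take different random paths, but every run ends near the same predetermined optimum.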

Physics-based form-finding is a nonlinear approach to optimization in which each element negotiates with adjacent elements to equalize difference, minimizing energy within the entire system until an equilibrium position is reached. The nonlinear operations imply volatility, but form-finding techniques are inherently risk-averse due to the stable nature of their formal characteristics and their rapid convergence to equilibrium. The stable characteristics of curvature within a catenary chain model or of a minimal surface within a soap-film model are immediately recognizable, known, and indexical. Their precise character is known in advance, and the application of these techniques can become a matter of style or cliché. Consequently, the stable process of selecting an algorithm, rather than the algorithm’s nonlinear operation, has the dominant influence over architectural form and character.
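Form-finding of the catenary-chain kind is often implemented by dynamic relaxation. The sketch below is one minimal version under stated assumptions (unit point masses, linear springs, pinned ends, damped explicit integration, and hypothetical parameter values); it is not the implementation of any particular tool. Starting the same chain from differently disturbed positions shows the convergence the text describes: for a fixed topology, the outcome is fixed.

```python
import math
import random


def relax_chain(n=11, span=10.0, rest=1.2, k=200.0, g=9.81,
                damping=0.9, dt=0.01, iterations=10000, jitter=0.0, seed=0):
    """Form-find a hanging chain by dynamic relaxation.

    Nodes are unit point masses joined by linear springs of rest length
    `rest`; the two end nodes are pinned to the supports. Each iteration,
    every free node sums the spring forces from its neighbors plus gravity
    and moves; damping removes kinetic energy so the chain settles into
    its minimum-energy equilibrium.
    """
    rng = random.Random(seed)
    pts = [[span * i / (n - 1), 0.0] for i in range(n)]
    for p in pts[1:-1]:  # optional random disturbance of the start state
        p[0] += rng.uniform(-0.3, 0.3) * jitter
        p[1] += rng.uniform(-2.0, 2.0) * jitter
    vel = [[0.0, 0.0] for _ in range(n)]
    for _ in range(iterations):
        forces = [[0.0, -g] for _ in range(n)]  # gravity on every node
        for i in range(n - 1):
            dx = pts[i + 1][0] - pts[i][0]
            dy = pts[i + 1][1] - pts[i][1]
            length = math.hypot(dx, dy)
            # Spring tension (positive when stretched), resolved along the segment.
            f = k * (length - rest) / length
            forces[i][0] += f * dx
            forces[i][1] += f * dy
            forces[i + 1][0] -= f * dx
            forces[i + 1][1] -= f * dy
        for i in range(1, n - 1):  # end nodes stay pinned
            for a in (0, 1):
                vel[i][a] = damping * vel[i][a] + dt * forces[i][a]
                pts[i][a] += dt * vel[i][a]
    return [tuple(p) for p in pts]


if __name__ == "__main__":
    for x, y in relax_chain():
        print(round(x, 3), round(y, 3))
```

The disturbed and undisturbed runs settle to the same sag curve, which is the sense in which the result is known before the simulation starts.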

Volatility is predicated on resisting equilibrium. This resistance emerges from the negotiation of positive and negative feedback in maintaining a dynamic condition from which a system reorganizes and unique forms of order emerge. Given the relative speed or simplicity with which a form-finding algorithm reaches equilibrium and its stable characteristics, a volatile strategy is possible only if there is a radical topological reorganization. Without this, the computational design process is simply the application of a predefined formal character to an a priori topology, since there is only one possible outcome to a minimal-energy model for a given topology. The misunderstanding of this inherent stability as a form of objectivity is an unintended disguise for the desire for certainty – a retreat from risk and the subjectivity of design.

Generative algorithms that engage recursive and self-organizing procedures are critical to the generation of complexity within computational design. While the role of generative algorithms in architecture is diverse, the architectural relevance of algorithmic design is dependent on the ability to encode architectural intent within the operation of the algorithm. Typically, generative algorithms are simply deployed as templates for architecture – abstract formal generators operating on an appropriated logic, devoid of any recognition of the architectural problem or proposition. Likewise, the application of algorithmic processes to discrete elements like facades or surface patterns limits complex systems to playing a minor role in defining architecture, and confines nonlinear processes to a known set of architectural hierarchies.

If computational design provides the framework within which to rethink architecture through a nonlinear paradigm, then it is not enough to simply apply the image of complexity to the body of modernity. It is perhaps this tendency that contributes to the perception of algorithmic architecture as obsessed with formal and aesthetic concerns – a perception that is antithetical to the organizational logic of complex systems. While this logic implies the potential for designing the inherently organizational aspects of architecture such as program and structure, complex systems are equally adept at engaging with form – as the emergence of form can be understood as the organization of matter.

The emergence of a strange or unknown set of characteristics requires a willingness to experiment through the design of an algorithm rather than simply its selection. Algorithms with highly indexical and stable relationships to their formal characteristics do little to advance an open-ended design process; instead they reduce algorithmic design to an act of stylistic selection. The mimetic nature and safe connotations of beauty and optimization in the algorithms extracted from biology demonstrate a prevailing conservatism within contemporary computational architecture.

The computational design methodologies outlined above can be seen to be either inherently stable or volatile within a predictable range – seeking a known attractor and the stability of selected characteristics. The rapid adoption within contemporary architecture of computational techniques that privilege pseudo-objective criteria, known characteristics, and direct control demonstrates a prevailing desire for stability and an unwillingness to engage risk and the highly volatile conditions that are required for something new to emerge. But rather than advocating highly volatile systems that resist authorship or that privilege the unrepeatable accident, what is needed is an alternative understanding of control and interaction between top-down and bottom-up processes.

The volatility of a design process is dependent upon the nature of the design intent and the abstraction between that intent and the designed artifact. The nature of authorship, or intent, within generative design can be categorized as either criteria or procedure. Designing to criteria is inherently stable, as the criteria constrain the possible realm of the artifact. In contrast, designing through procedure is inherently speculative, as it is concerned with the conditions of operation rather than conditioning the outcome. This necessary abstraction between the design intent and artifact enables an emergent outcome through the interaction of design processes.

The highly volatile model of behavioral formation is in opposition to the inherently stable computational models that have been adopted. This methodology is not intrinsically tied to formal or organizational characteristics that are simply selected, but instead is an experimental approach that generates emergent architectural order, organization, and characteristics.

Drawing from the logic of swarm intelligence and operating through multi-agent algorithms, behavioral formation functions by encoding simple architectural decisions within a distributed system of autonomous computational agents. The interaction of these agents and their local decisions generates a self-organizing design intent, giving rise to a form of collective intelligence and emergent behavior at a global scale. This represents a shift from the explicit design of form and organization to the orchestration of intensive processes of formation through the design of the underlying behaviors of matter and geometry.
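The text does not specify the agents' architectural rules, so the sketch below substitutes Craig Reynolds' well-known flocking rules (cohesion, alignment, separation) purely to show the mechanics of a multi-agent system: each agent reads only its local neighbors, and any global order arises from their interaction. All names, weights, and radii are assumptions; in behavioral formation these generic rules would be replaced by encoded design decisions.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Agent:
    x: float
    y: float
    vx: float
    vy: float


def neighbors(agent: Agent, swarm: list[Agent], radius: float) -> list[Agent]:
    """An agent only perceives others within a local radius: no global view."""
    return [o for o in swarm
            if o is not agent and math.hypot(o.x - agent.x, o.y - agent.y) < radius]


def swarm_step(swarm, radius=5.0, sep_dist=1.5, w_coh=0.01, w_ali=0.05,
               w_sep=0.1, max_speed=1.0):
    """One synchronous update: every agent reacts to its neighbors only."""
    updated = []
    for a in swarm:
        near = neighbors(a, swarm, radius)
        ax = ay = 0.0
        if near:
            m = len(near)
            # Cohesion: steer toward the local center of mass.
            ax += w_coh * (sum(o.x for o in near) / m - a.x)
            ay += w_coh * (sum(o.y for o in near) / m - a.y)
            # Alignment: match the local average heading.
            ax += w_ali * (sum(o.vx for o in near) / m - a.vx)
            ay += w_ali * (sum(o.vy for o in near) / m - a.vy)
            # Separation: push away from agents that are too close.
            for o in near:
                d = math.hypot(a.x - o.x, a.y - o.y)
                if 0.0 < d < sep_dist:
                    ax += w_sep * (a.x - o.x) / d
                    ay += w_sep * (a.y - o.y) / d
        vx, vy = a.vx + ax, a.vy + ay
        speed = math.hypot(vx, vy)
        if speed > max_speed:  # cap the speed
            vx, vy = vx * max_speed / speed, vy * max_speed / speed
        updated.append(Agent(a.x + vx, a.y + vy, vx, vy))
    return updated


def random_swarm(count: int, size: float = 20.0, seed: int = 0) -> list[Agent]:
    """Scatter agents with random positions and headings in a square."""
    rng = random.Random(seed)
    return [Agent(rng.uniform(0, size), rng.uniform(0, size),
                  rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(count)]
```

Every update reads the previous state of the whole swarm before any agent moves, so the result does not depend on the order in which agents are processed.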

Self-organization within models of behavioral formation generates emergent characteristics in a manner not dissimilar to many of the nonlinear generative algorithms that are criticized here. What differentiates behavioral formation is the architectural nature of the rules from which the characteristics emerge: these are loaded with specific design intent. Behavioral formation is premised on the generation of architectural behaviors through the interaction of local design intentions. Original characteristics emerge through a design process based on architectural tendencies and behaviors rather than the adoption or misappropriation of stable characteristics and known criteria from biology or computer science.

Agents within systems of behavioral formation are encoded within the geometry of vectors, strands, and surfaces, responding to the local flow, density, proximity, and direction of geometry. Their responses are based either on specific architectural requirements or on more abstract experimental rules that are evaluated through the emergence of a macro-level order. These two models typically operate in parallel. The former strategy enables the negotiation between complex architectural problems that relate to the optimization of structure, program, form, and ornament. The latter is concerned with mining the generative potential of systems of formation in search of novel organizational and formal traits. Within both modes, emergent macro-level characteristics are coaxed out of the self-organization of the system.

In contrast to optimization algorithms, there are no a priori criteria constraining behavioral formation. Instead, a subjective design sensibility emerges from orchestrating the interaction of many micro decisions and intentions. While design intent might be prescriptive at the level of the agent, these processes are never stable at the macro scale because the agent is oblivious to the emergence of the swarm. The behavioral formation logic of encoding agency within geometry avoids any generic mapping of known algorithms, and, as such, behavioral formation resists the indexical nature of appropriated algorithmic techniques.

The local operation of behavioral formation inherently limits the interaction with macro-level concerns such as structure or typology. Multi-agent models are premised on the condition of global ignorance; their exclusively local operation is critical to the emergence of complex order. In an attempt to overcome this limitation, two resilient models based on internal resistance are proposed: behavioral structural formation and messy computation. These strategies exploit the dirty negotiation between the opposing methodologies of generative design and top-down evaluation or control. They are constantly in an internal state of conflict, maintaining volatile and emergent characteristics while negotiating pragmatic and nonsystemic concerns.

Behavioral structural formation is a volatile generative strategy embedded with a rational set of structural rules. It operates as a negotiation between the local interactions of multi-agent models and the global evaluation of structural analysis. The multi-agent generative algorithm is encoded with design behaviors from which a proto-architectural form emerges. The flow of load through this form is continuously analyzed at the global level, with information about the system’s localized structural performance fed back to the individual agents. In response to local structural conditions, the design agents adapt their behavior based on heuristic structural rules designed to resist load. This strategy enables the agents to respond to the local implications of global conditions within a continuous feedback loop.

This approach is not premised upon structural performance generating form; instead, the behavior of generative design agents is conditioned by structural information. The methodology can be described through a strand model. In the case of excessive deflection, for example, the strands separate vertically to generate greater structural depth, create more strands to dissipate load, and bundle to generate greater structural rigidity. These operations integrate structure as one of the agent’s many competing design behaviors. This integrated approach enables a highly volatile design process to resolve structural forces without imposing a predetermined structural schema or structurally post-rationalizing architectural form.
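The feedback loop of global analysis and local response in the strand model can be sketched as a toy in Python. Everything here is an assumption for illustration: a single hypothetical strand bundle stands in for the agents, the textbook midspan deflection of a simply supported beam under a point load stands in for the global structural analysis, the span/360 limit and all numeric values are hypothetical, and only two of the three heuristics (vertical separation and adding strands) are modeled; bundling is omitted.

```python
from dataclasses import dataclass, field


@dataclass
class StrandBundle:
    """Hypothetical strand bundle, strands split evenly above and below a centroid."""
    strands: int = 2
    depth: float = 0.2  # vertical separation of the strand layers (m)
    history: list = field(default_factory=list)


def analyse(b: StrandBundle, load: float, span: float,
            E: float = 200e9, area: float = 5e-4) -> float:
    """Global analysis stand-in: midspan deflection of a simply supported
    beam under a central point load, delta = P L^3 / (48 E I), with I
    approximated as strands * area * (depth / 2)^2 (parallel-axis term only).
    """
    I = b.strands * area * (b.depth / 2) ** 2
    return load * span ** 3 / (48 * E * I)


def respond(b: StrandBundle, deflection: float, limit: float, max_depth: float) -> bool:
    """Hypothetical agent heuristics fed by the analysis: separate vertically
    first, then add strands once the depth limit is reached. Returns False
    when no action is needed."""
    if deflection <= limit:
        return False
    if b.depth < max_depth:
        b.depth = min(b.depth * 1.25, max_depth)
        b.history.append("separate")
    else:
        b.strands += 1
        b.history.append("add strand")
    return True


def form(b: StrandBundle, load: float, span: float, max_depth: float,
         max_iter: int = 100) -> float:
    """Feedback loop: analyse globally, respond locally, repeat."""
    limit = span / 360  # assumed serviceability limit
    for _ in range(max_iter):
        deflection = analyse(b, load, span)
        if not respond(b, deflection, limit, max_depth):
            break
    return analyse(b, load, span)


if __name__ == "__main__":
    bundle = StrandBundle()
    final = form(bundle, load=40e3, span=6.0, max_depth=0.3)
    print(bundle.history, bundle.strands, bundle.depth, round(final, 4))
```

The recorded history shows structure entering as a sequence of local behavioral adjustments rather than as a precomputed structural form.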

Messy computation is a nonlinear and inconsistent process of negotiation between generative and explicit procedures. This requires a relentless, iterative torturing of the model – editing, extracting, manipulating, and returning the model to the volatile space of algorithmic formation. Messy computation involves constant feedback between algorithmic procedures and direct digital surface modeling, an attempt to maximize and hybridize the potential of each model of design.

Within this messy feedback, the algorithmic generation of form and organization becomes the input to the explicit modeling process and vice versa. The generative and the explicit each inform the other within a manual feedback loop until a coherent or synthetic vocabulary emerges. The constant but inconsistent feedback slowly congeals into a coherent behavior and set of organizational and formal characteristics. While the algorithmic procedures are capable of generating highly emergent outcomes, the inability of multi-agent systems to sufficiently comprehend topology or enclosure is mediated by the explicit modeling of the designer. This interaction is both a shortcut for intuition and a mechanism for direct, subjective, and nonsystemic decisions. A nonlinear design approach such as messy computation necessitates the interaction of all of its constituent systems within a feedback loop – it operates as an ecology.

The inherent conflict between the local operation of behavioral formation and macro architectural intent generates a series of new problems, techniques, and opportunities that enable original organizations, forms, structures, and characteristics to emerge. The internal resistance of behavioral structural formation and messy computational strategies differentiates these methodologies from the direct application of algorithmic processes to normative hierarchies and explicit initial conditions. It is through the constant negotiation within these processes that design intuition and heuristic approaches operate. Through this volatile struggle, algorithmic strategies become deeply embedded within architectural design.

Volatile algorithmic design methodologies can be understood as part of a broader contemporary interest in indeterminacy and nonlinearity that has been emerging since the advent of chaos and complexity theory. However, the argument for volatility is more than a theoretical concern; it is a fundamental concern for the importance of subjectivity and the nature of risk within design.
